This second post in the series is another nostalgic trip down memory lane: it is dedicated to the first robotic vision system I ever built.

This project came about as part of a Digital Image Processing class I took in the spring of 2004. The course was taught by Dr. Oge Marques (Blog – Twitter) as part of the curriculum for the Computer Engineering degree I was pursuing. I mention this because Dr. Marques had an incredibly positive impact on my academic career: he encouraged me to be creative, went out of his way to provide a fantastic learning environment, and has been a great teacher, mentor and friend.

The Project

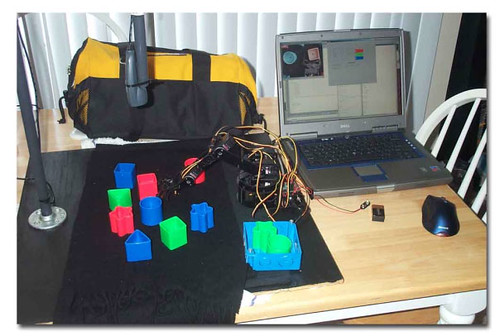

Part of the coursework involved proposing an image processing project to be implemented by the end of the course. For whatever reason, I thought it would be interesting to incorporate a Lynxmotion (http://www.lynxmotion.com) robotic arm into the project. Mostly, this was just an excuse to buy a robot arm. The project would consist of a robot arm that used a USB web camera to analyze objects in a fixed area on a table and then retrieve one of them based on user input.

The objects to be analyzed came from a Fisher-Price shape sorter box. You know the one you had as a kid: you would drop the square plastic piece into its hole on top and it would fall inside, or you would try to see whether the star would fit through the triangular hole sideways, or whether some amount of force would deform the piece just enough to slide through (it never did).

The Solution

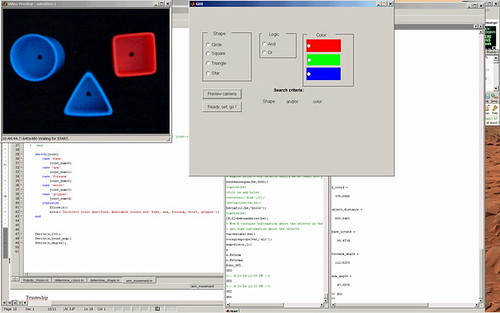

Once the project had been defined, it was time to design the software and start building the whole thing. MATLAB was used as the development environment, along with the MATLAB Image Acquisition and Image Processing toolboxes. There were two main implementation challenges: (1) how to mathematically describe and identify an object based on its shape, and (2) how to determine the object's position relative to the robot arm and move the arm to retrieve it.

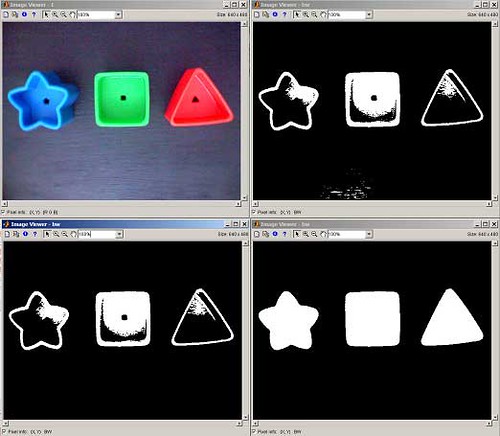

The first of these challenges was tackled when Dr. Marques suggested using a MATLAB image processing library by Gonzalez and Woods (http://www.imageprocessingplace.com/) to calculate the boundary signature of an object. This involved taking an image frame and doing a bit of preprocessing, as seen in the image below. Specifically, this included applying a threshold to binarize the image, removing spurious objects and then filling in the remaining objects.

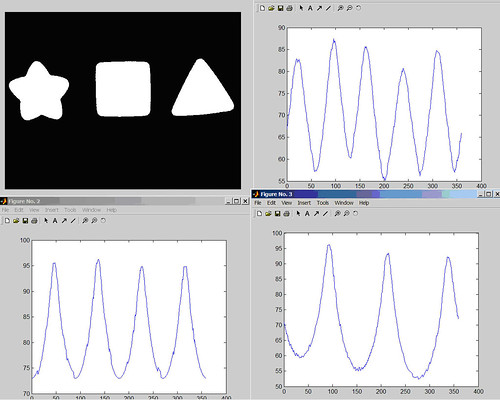
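
The three preprocessing steps can be sketched in plain Python as below. This is not the original MATLAB code; it is a minimal sketch assuming a grayscale frame stored as a list of rows, objects brighter than the table, 4-connectivity, hypothetical `threshold` and `min_area` values, and that "filling in" means filling holes inside each object. In MATLAB's Image Processing Toolbox, roughly the same steps correspond to `im2bw` (`imbinarize` in newer releases), `bwareaopen` and `imfill(bw, 'holes')`.

```python
from collections import deque

def binarize(gray, threshold):
    """0/1 mask: 1 where the pixel is brighter than `threshold` (assumes bright objects)."""
    return [[1 if p > threshold else 0 for p in row] for row in gray]

def _components(mask, value):
    """Yield lists of (row, col) pixels forming 4-connected regions equal to `value`."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    for r0 in range(h):
        for c0 in range(w):
            if mask[r0][c0] != value or seen[r0][c0]:
                continue
            seen[r0][c0] = True
            queue, region = deque([(r0, c0)]), []
            while queue:  # breadth-first flood fill
                r, c = queue.popleft()
                region.append((r, c))
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    nr, nc = r + dr, c + dc
                    if 0 <= nr < h and 0 <= nc < w and not seen[nr][nc] and mask[nr][nc] == value:
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            yield region

def remove_small_objects(mask, min_area):
    """Clear foreground regions smaller than `min_area` pixels (spurious specks)."""
    out = [row[:] for row in mask]
    for region in _components(mask, 1):
        if len(region) < min_area:
            for r, c in region:
                out[r][c] = 0
    return out

def fill_holes(mask):
    """Turn background regions that never touch the image border into foreground."""
    h, w = len(mask), len(mask[0])
    out = [row[:] for row in mask]
    for region in _components(mask, 0):
        if not any(r in (0, h - 1) or c in (0, w - 1) for r, c in region):
            for r, c in region:
                out[r][c] = 1
    return out

def preprocess(gray, threshold=128, min_area=5):
    """Threshold, drop specks, then fill holes (hypothetical default parameters)."""
    return fill_holes(remove_small_objects(binarize(gray, threshold), min_area))
```

For example, a ring of bright pixels with a dark centre plus a lone bright speck elsewhere comes out as a solid square with the speck removed.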

Once the image was cleaned up, the frame could be parsed by the signature library, which produced a data set representing the distance from the center of the object to its boundary as a function of angle. As you can see, the distinguishing characteristic is the number of peaks in each graph, which corresponds to the number of corners the object has. The graph of the star has 5 peaks, the square has 4, the triangle has 3 and the circle has a flat graph (not pictured below). To count the peaks, a horizontal line can be placed across the vertical midpoint of the graph and we count how many times the signature crosses it. Each peak crosses the line twice, once going up and once coming down, so dividing that count by two gives the number of peaks (or corners).

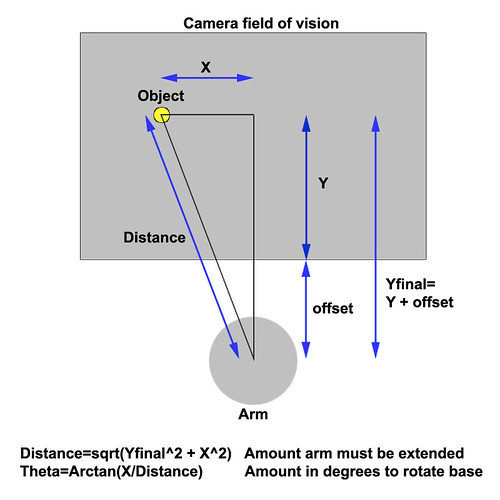
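
The peak-counting trick can be sketched in Python as follows. This is an illustration, not the Gonzalez and Woods library code: the function names, the `flat_tolerance` cut-off used to decide that a signature is flat (a circle), and taking the mean of the boundary points as the centre are all assumptions. The `outline` and `star_vertices` helpers only build synthetic shapes to try it on.

```python
import math

def signature(boundary):
    """Distances from the centre to each boundary point, ordered by angle.

    `boundary` is a list of (x, y) points on the object's outline. As a
    simplification, the centre is the mean of the boundary points.
    """
    cx = sum(x for x, _ in boundary) / len(boundary)
    cy = sum(y for _, y in boundary) / len(boundary)
    ordered = sorted(boundary, key=lambda p: math.atan2(p[1] - cy, p[0] - cx))
    return [math.hypot(x - cx, y - cy) for x, y in ordered]

def count_corners(sig, flat_tolerance=0.05):
    """Count peaks as (crossings of the horizontal midline) / 2."""
    lo, hi = min(sig), max(sig)
    if hi - lo <= flat_tolerance * hi:
        return 0  # a flat signature means no corners (a circle)
    mid = (lo + hi) / 2
    above = [s > mid for s in sig]
    # The signature is periodic (360 degrees wraps to 0), so index -1 lets
    # the first sample be compared with the last one.
    crossings = sum(above[i] != above[i - 1] for i in range(len(above)))
    return crossings // 2

def outline(vertices, samples_per_edge=50):
    """Synthetic test shape: evenly spaced points along a closed polygon."""
    points = []
    for (x0, y0), (x1, y1) in zip(vertices, vertices[1:] + vertices[:1]):
        for k in range(samples_per_edge):
            t = k / samples_per_edge
            points.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return points

def star_vertices(points=5, outer=1.0, inner=0.4):
    """Alternate outer and inner radii to build a star outline."""
    return [((outer if i % 2 == 0 else inner) * math.cos(math.pi * i / points),
             (outer if i % 2 == 0 else inner) * math.sin(math.pi * i / points))
            for i in range(2 * points)]

square = [(1, 1), (-1, 1), (-1, -1), (1, -1)]
print(count_corners(signature(outline(square))))           # 4
print(count_corners(signature(outline(star_vertices()))))  # 5
```

Using the midpoint between the lowest and highest distance, rather than a fixed value, keeps the count independent of the object's size in the frame.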

At this point, it was not too difficult to tell a red square from a blue circle, for example, but the system still needed to know how to command the robot arm to retrieve the object. A few simple geometric calculations later, the solution was at hand, as seen in the image below.
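
The post does not spell out those calculations, so the following Python is only one plausible version. It assumes an overhead camera with a single calibrated cm-per-pixel scale, a known pixel position directly above the arm's base, and an arm modelled as a rotating base plus a two-link shoulder/elbow chain solved with the law of cosines. The link lengths, scale factor and function names are hypothetical, and the wrist and gripper are ignored.

```python
import math

def pixel_to_table(px, py, scale_cm_per_px, origin_px):
    """Map an image pixel to table coordinates (cm) relative to the arm base.

    Assumes a camera looking straight down, so one uniform scale applies;
    `origin_px` is the (hypothetical) pixel directly above the arm's base.
    """
    ox, oy = origin_px
    # Image rows grow downward, so flip y to get a right-handed table frame.
    return (px - ox) * scale_cm_per_px, (oy - py) * scale_cm_per_px

def arm_angles(x, y, z, upper_arm, forearm):
    """Base, shoulder and elbow angles (radians) placing the gripper at (x, y, z).

    The base rotation points the arm at the target; a two-link planar
    inverse-kinematics solution handles reach `r` and height `z`
    (measured relative to the shoulder joint).
    """
    base = math.atan2(y, x)
    r = math.hypot(x, y)
    cos_elbow = (r * r + z * z - upper_arm ** 2 - forearm ** 2) / (2 * upper_arm * forearm)
    if not -1.0 <= cos_elbow <= 1.0:
        raise ValueError("target is out of reach")
    elbow = math.acos(cos_elbow)  # law of cosines
    shoulder = math.atan2(z, r) - math.atan2(forearm * math.sin(elbow),
                                             upper_arm + forearm * math.cos(elbow))
    return base, shoulder, elbow

x, y = pixel_to_table(160, 100, 0.1, (60, 150))
base, shoulder, elbow = arm_angles(x, y, -2.0, upper_arm=12.0, forearm=10.0)
print([round(math.degrees(a), 1) for a in (base, shoulder, elbow)])
```

A quick sanity check is to plug the angles back into the forward kinematics, r = L1·cos(shoulder) + L2·cos(shoulder + elbow) and z = L1·sin(shoulder) + L2·sin(shoulder + elbow), which should reproduce the target.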

With all of that sorted out, a user could walk up to the system and enter selection criteria (shape and/or color); the MATLAB program would then find the object of interest, calculate its location, send movement commands to the arm over a serial port and retrieve the object.
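
The selection step can be sketched the same way in Python. Everything here is hypothetical: the `DetectedObject` record, the reference colours in `PALETTE` (matched by nearest mean RGB), and the corner-count-to-shape table, which simply follows the peak counts described earlier. The chosen object's centroid is what would then go through the geometry step before any commands are sent to the arm.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical reference colours for the sorter pieces (mean RGB, 0-255).
PALETTE = {
    "red": (200, 40, 40),
    "blue": (40, 60, 200),
    "yellow": (220, 200, 40),
    "green": (40, 160, 60),
}

# Corner count (from the signature peaks) -> shape name.
SHAPES = {0: "circle", 3: "triangle", 4: "square", 5: "star"}

@dataclass
class DetectedObject:
    corners: int        # peak count of the boundary signature
    mean_rgb: tuple     # average colour of the object's pixels
    centroid_px: tuple  # (x, y) position in the image

    @property
    def shape(self):
        return SHAPES.get(self.corners, "unknown")

    @property
    def color(self):
        # Nearest palette entry by squared Euclidean distance in RGB space.
        return min(PALETTE, key=lambda name: sum(
            (a - b) ** 2 for a, b in zip(self.mean_rgb, PALETTE[name])))

def select(objects, shape=None, color=None) -> Optional[DetectedObject]:
    """Return the first object matching the criteria; None means 'any'."""
    for obj in objects:
        if (shape is None or obj.shape == shape) and (color is None or obj.color == color):
            return obj
    return None
```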

So, did it work? Yes, it worked brilliantly! You can see a video of the system in action on YouTube here:

Update 12/29/2012: I just found the original video recording of the presentation I gave in class for this project! Bonus!
